Score Gaps

Which countries over-perform or under-perform relative to their pillar profiles?

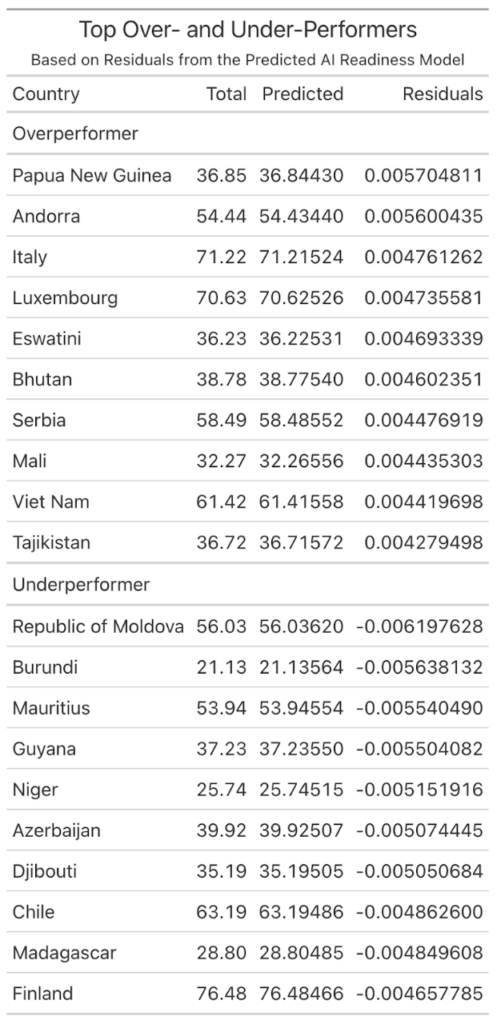

Having identified which pillar matters most, we next ask why some countries’ actual performance is better (or worse) than their pillar profiles predict. According to Comunale and Manera’s research, structural indicators alone often fail to capture the social factors that shape national AI capabilities. To explore this, we fit a linear model that uses the 10 dimensions as predictors of the AI readiness score and calculate the residual (actual minus predicted score) for each country. Positive residuals mark “overperformers”: countries whose actual AI readiness score exceeds the model’s expectation. Negative residuals mark “underperformers”: countries whose actual score falls short of what their dimensions predict.
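In symbols, the setup can be sketched as follows. The notation is introduced here for illustration and does not come from the dataset: $y_i$ is country $i$’s actual AI readiness score, $x_{ij}$ is its score on dimension $j$, and $\hat\beta_j$ are the fitted coefficients.

$$
\hat{y}_i = \hat\beta_0 + \sum_{j=1}^{10} \hat\beta_j \, x_{ij}, \qquad e_i = y_i - \hat{y}_i
$$

A country with $e_i > 0$ is an overperformer and a country with $e_i < 0$ is an underperformer.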

This chart compares each country’s actual AI readiness score with the score predicted from its pillars. Countries above the line (blue) over-perform, while those below the line (red) under-perform relative to their structural capacity.

This part of the analysis reveals an interesting pattern: overperformers tend to share certain characteristics, such as a strong national AI strategy, efficient public management, cultural familiarity with new technologies, or targeted investment that reinforces existing advantages. Underperformers, by contrast, may be facing political instability, a fragmented digital environment, historical inequality, or a mismatch between policy objectives and actual capabilities. Hassan emphasizes that failures in trust and organizational readiness can prevent AI systems from scaling beyond pilot projects, which mirrors the underperformance seen in some countries (Hassan, 2024). Even when two countries have similar pillar profiles, their outcomes can differ, because AI readiness is also influenced by social factors that are not in the dataset, such as trust in public institutions and attitudes toward technology policy. These patterns are a reminder that while the pillars measure structural capacity, performance also depends on social dynamics.

To isolate the top ten overperformers and underperformers, we used a simple linear model to predict each country’s total score from its dimension scores and computed the corresponding residuals. We then sorted the residuals and identified the countries with the largest positive residuals (overperformers) and the largest negative residuals (underperformers). The top ten overperformers were Papua New Guinea, Andorra, Italy, Luxembourg, Eswatini, Bhutan, Serbia, Mali, Viet Nam, and Tajikistan. The top ten underperformers were the Republic of Moldova, Burundi, Mauritius, Guyana, Niger, Azerbaijan, Djibouti, Chile, Madagascar, and Finland.
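The residual ranking described above can be sketched in a few lines of Python. This is a minimal illustration, not the code used for the analysis: the helper names `score_gaps` and `rank_gaps` are hypothetical, the fit is plain ordinary least squares with an intercept, and the data are randomly generated placeholders, not the real index.

```python
import numpy as np

def score_gaps(X, y):
    """Fit y ~ intercept + X by ordinary least squares.

    Returns the residuals (actual minus predicted score), one per country.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    A = np.column_stack([np.ones(len(y)), X])  # prepend an intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return y - A @ coef

def rank_gaps(names, residuals, k=10):
    """Return (overperformers, underperformers): the k largest positive
    and k largest negative residuals, most extreme first."""
    order = np.argsort(residuals)  # ascending: most negative first
    under = [names[i] for i in order[:k]]
    over = [names[i] for i in order[::-1][:k]]
    return over, under

# Synthetic placeholder data (assumption): 40 countries, 10 dimension scores.
rng = np.random.default_rng(0)
n_countries, n_dims = 40, 10
X = rng.uniform(0, 100, size=(n_countries, n_dims))
y = X.mean(axis=1) + rng.normal(0, 3, size=n_countries)  # total score plus noise
names = [f"Country {i:02d}" for i in range(n_countries)]

residuals = score_gaps(X, y)
over, under = rank_gaps(names, residuals, k=10)
print("Overperformers:", over)
print("Underperformers:", under)
```

Because the model includes an intercept, the residuals sum to zero, so the sample always contains both overperformers and underperformers. The labels describe a country's position relative to the fitted line, not an absolute level of readiness.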